When data gives the wrong solution

Article Update - It appears the story of Abraham Wald creating a diagram of the plane is not true. According to Tim Harford in The Data Detective (p. 110 of the hardcover edition), Wald “produced a research document full of complex technical analysis” but never created a plane diagram like the one shown in this post. Survivor bias is real, but the specifics of this story, while originally sourced, have not held up. Part of the issue is that Wald died in a plane crash in 1950. His research was declassified many years later and was likely misunderstood. I’m leaving up the post, but I wanted to update it for full transparency. - Trevor

During World War II, researchers at the Center for Naval Analyses faced a critical problem. Many bombers were getting shot down on runs over Germany. The naval researchers knew they needed hard data to solve this problem and went to work. After each mission, the bullet holes and damage on each bomber were painstakingly reviewed and recorded. The researchers pored over the data looking for vulnerabilities.

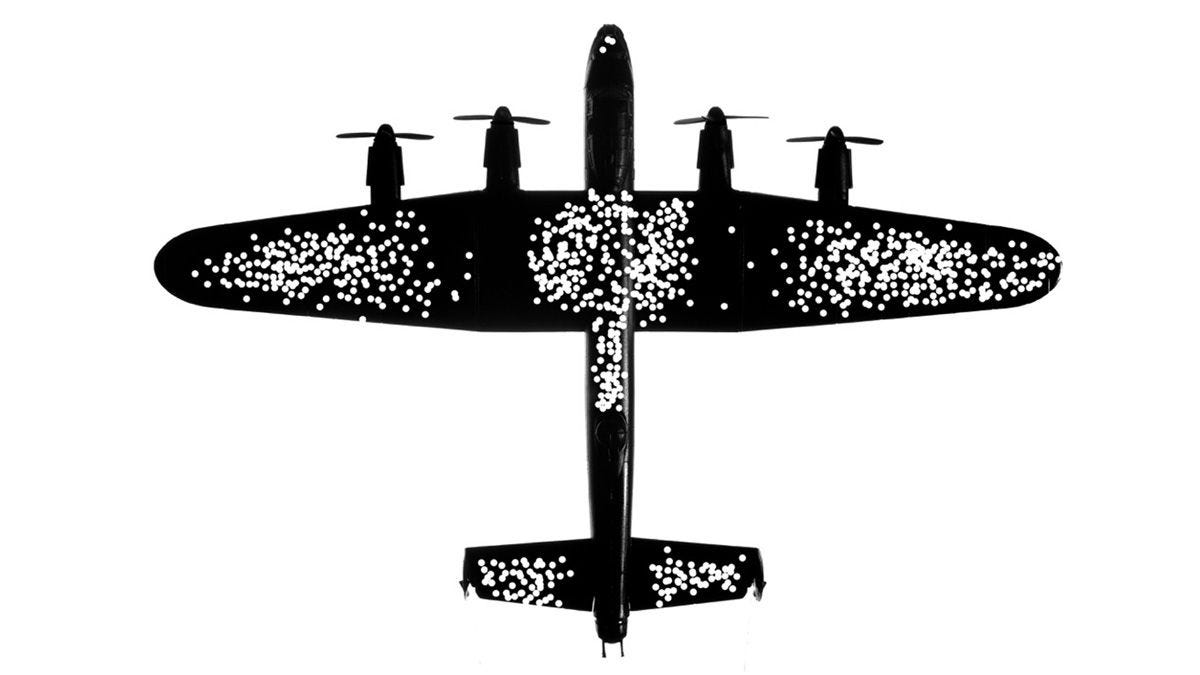

The data began to show a clear pattern (see picture). Most damage was to the wings and body of the plane.

The solution to their problem was clear. Increase the armor on the plane's wings and body.

But there was a problem. The analysis was completely wrong.

Before the planes were modified, a Hungarian-Jewish statistician named Abraham Wald reviewed the data. Wald had fled Nazi-occupied Austria and worked in New York with other academics to help the war effort.

Wald's review pointed out a critical flaw in the analysis. The researchers had only looked at bombers that had returned to base.

Missing from the data? Every plane that had been shot down.

But the research wasn’t a wasted effort. These surviving bombers rarely had damage in the cockpit, engine, or parts of the tail. This wasn’t because those areas had superior protection. In fact, they were the most vulnerable areas on the entire plane: bombers hit there rarely made it back to be counted.

The researchers’ bullet hole data had created a map of the exact places where the bomber could be shot and still survive.

With the new analysis in hand, crews reinforced the bombers' cockpit, engines, and tail armor. The result was fewer fatalities and greater success in bombing missions. This analysis proved so useful that it continued to influence military plane design up through the Vietnam War.

This story is a vivid example of survivor bias. Survivor bias occurs when we look only at the data from those who succeed and exclude those who fail.
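To see how this plays out in numbers, here is a minimal Python sketch of the bomber scenario. Everything in it is an assumption made up for illustration: the four plane sections, the loss probabilities, and the `simulate` function are hypothetical and are not historical data or Wald's actual method. Each simulated plane takes one hit in a section chosen at random, and a hit to a vulnerable section is more likely to bring the plane down.

```python
import random

# Hypothetical chance that a single hit in each section downs the plane.
# These numbers are invented for illustration; they are not historical data.
LOSS_PROBABILITY = {
    "engine": 0.8,
    "cockpit": 0.7,
    "wings": 0.1,
    "fuselage": 0.1,
}


def simulate(num_planes=10_000, seed=0):
    """Return (hits on all planes, hits on returning planes) per section."""
    rng = random.Random(seed)
    sections = list(LOSS_PROBABILITY)
    all_hits = {s: 0 for s in sections}
    survivor_hits = {s: 0 for s in sections}
    for _ in range(num_planes):
        # Every section is equally likely to be hit.
        section = rng.choice(sections)
        all_hits[section] += 1
        shot_down = rng.random() < LOSS_PROBABILITY[section]
        if not shot_down:
            # Only returning planes end up in the researchers' records.
            survivor_hits[section] += 1
    return all_hits, survivor_hits


all_hits, survivor_hits = simulate()
for section in LOSS_PROBABILITY:
    print(f"{section:9s} all planes: {all_hits[section]:5d}   "
          f"returned: {survivor_hits[section]:5d}")
```

In this toy setup, hits are spread roughly evenly across all planes, yet the returning planes show far fewer engine and cockpit hits. Someone looking only at the "returned" column would see damage concentrated in the wings and fuselage, which is the same misleading pattern described in the story.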

Survivor bias is all around us, especially in the media. You read articles about entrepreneurs who risked everything financially and are now a success. But no one profiles the hundred other entrepreneurs who followed the same strategy and went bankrupt.

Or consider the business classic, Good to Great, which profiled successful companies and the characteristics that made them “great.” But what about all the companies that failed but also had “Level 5 Leaders” and “the right people on the bus”? The analysis excludes these companies “missing from the data.”

The takeaway:

When solving a problem, ask yourself whether you are only looking at the “survivors.”

Your solution might not be in what is there but what is missing.

Notes:

For more on this story, see Black Box Thinking by Matthew Syed (2015). New York: Penguin Random House, pp. 33-37.